AFM Tip-Cell Segmentation Pipeline

Automated segmentation pipeline for AFM T-cell images — fine-tuned Cellpose with probe-aware selection logic, achieving 0.889 mean Dice across 216 labeled frames with zero empty outputs.

Solo Researcher (Undergraduate) · Fall 2025 · Team of 1

Contributions

Fine-tuned Cellpose on AFM T-cell images to meet a no-empty-output requirement across 8 experimental subsets

▾Result

Global mean Dice 0.889, global mean IoU 0.813, pred-empty frames 0/216. 7 of 8 subsets cluster at Dice 0.88–0.91.

Designed probe-aware selection logic to enforce domain-specific constraints and eliminate wrong-cell picks in crowded frames

▾Result

Probe-aware logic eliminated wrong-cell picks that baseline largest-cell selection failed on across crowded DN2–DN4 frames. Zero pred-empty frames. All fallback-triggered frames are flagged and logged.

Built a structured verification framework with per-frame QC artifacts, failure logging, and subset-level acceptance metrics

▾Result

100% of frames produce reviewable overlay and mask. Worst-scoring stems identifiable from aggregated logs without re-running inference. DN2-rate outlier identified and root cause documented.

Interfaces

| From | To | Type | Description |

|---|---|---|---|

| Raw AFM frame (.tif) | Preprocessing module | data | Grayscale load + percentile [2,98] intensity normalization; optional contrast normalization and morphological closing per subset config |

| Preprocessing module | Cellpose model | data | Full AFM frame passed without cropping; per-subset cellprob_threshold and flow_threshold overrides applied at inference time |

| Cellpose model | Probe-aware selection logic | software | Multi-instance integer label map passed to geometry rule; probe coordinates sourced from auto-detector or PROBE_MAP fallback |

| Probe-aware selection logic | QC artifact writer | software | Single binary tip-cell mask + selected instance metadata; path taken (normal, retry, fallback) logged per frame to JSON |

Documents

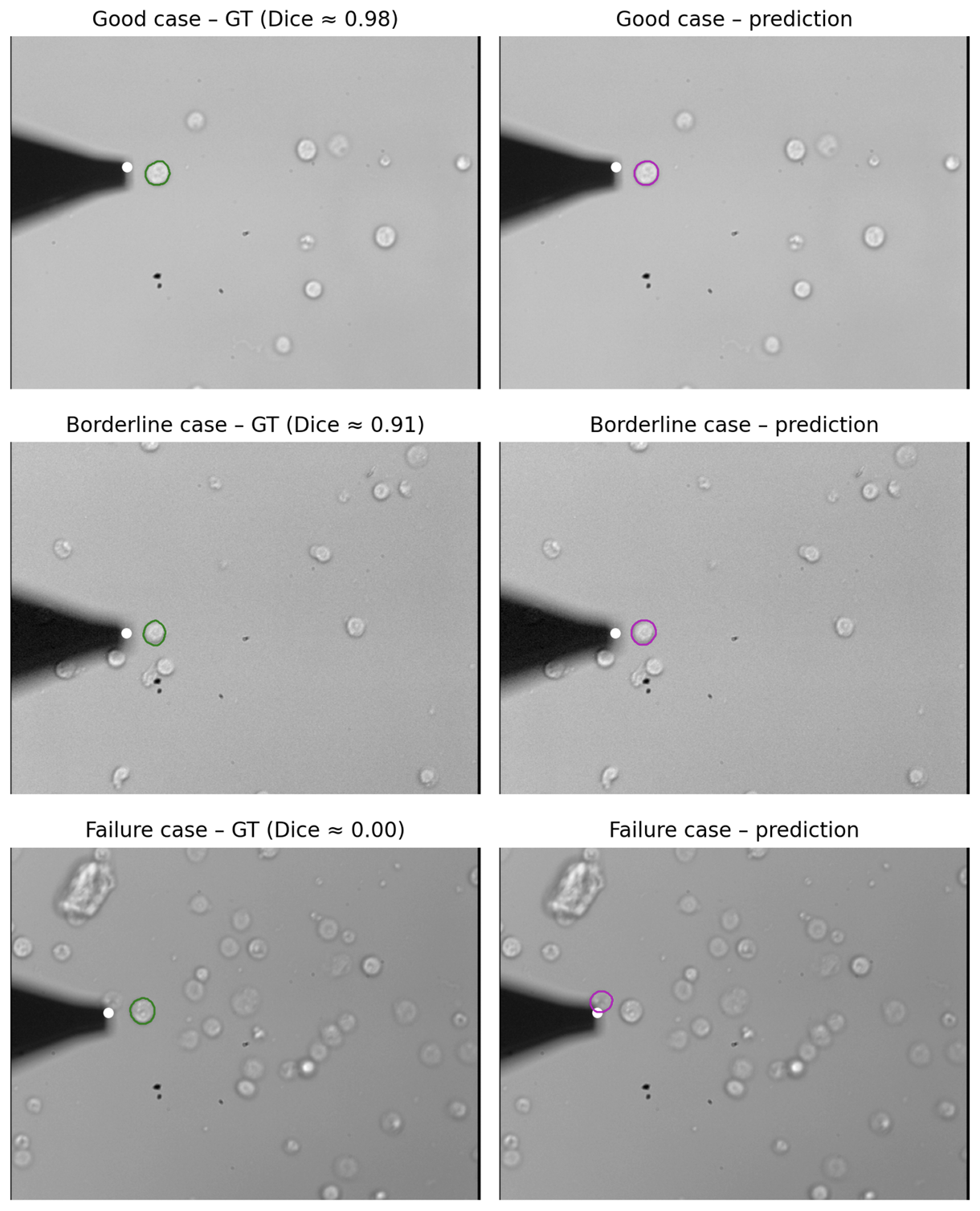

6-panel grid showing ground truth vs predicted masks across three qualitative performance classes

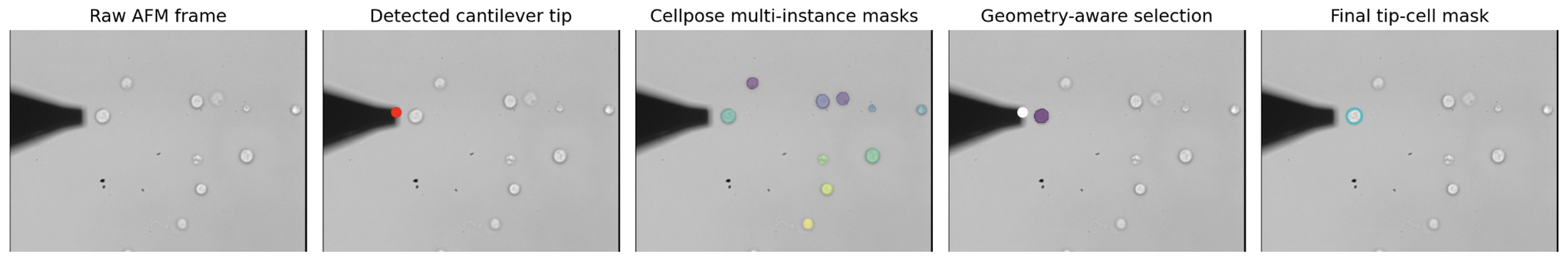

5-panel strip showing each stage: raw AFM frame → detected cantilever tip → Cellpose multi-instance masks → geometry-aware selection → final tip-cell mask

Limitations

- • DN2-rate is the weakest subset (Dice 0.816). A subset of frames contain faint cell rims or overlapping cells near the probe tip, causing the geometry rule to select the wrong cell. Resolving this requires geometry-aware multi-channel prediction at the model level, not post-processing improvements.

- • Thresholds are manually tuned per imaging subset. Generalization to a new donor, imaging session, or microscope would require full re-tuning. Domain-aware training is the correct long-term fix.

- • Auto tip detection relies on a static rightmost-point heuristic and assumes the cantilever is darker than the background. Performance is consistent for DN2–DN4 but degrades on DN1 and would fail if cantilever geometry changed significantly.

Lessons & Next Steps

- • The dominant failure mode was cell selection, not segmentation — the model segmented correctly but chose the wrong cell. Identifying this shifted the design direction from backbone improvement to geometry-aware post-processing.

- • Per-frame path logging (normal, retry, fallback) made failure patterns immediately diagnosable without re-running inference. Structured logging is what separates a debuggable pipeline from a black box.

- • Per-subset threshold overrides were effective but are a structural workaround — they compensate for a model with no domain understanding. The correct long-term architecture is domain conditioning or geometry-aware training, not additional manual knobs.